Future Model Runs#

Let us do some research now, with help of the CMIP6 dataset. We will look how our model fares with historical CMIP data and their climate models that predict future scenarios. I have setup some possible climate change models for you to use, you have to fill in your camel ID and think of a research question!

We like the sentence:

To understand [environment issue] in [region] we will study te impact of [verb/noun] on [hydrological variable].

But feel free to come up with your own! Some hints:

Maybe look at a smaller time period.

What do certain climate changes do to your region.

In This Notebook: Model runs with CMIP Forcing#

In this notebook we will do the model runs for the chosen areas with the generated forcing from the previous notebook. This will be used to do our research with!

Importing and setting up#

# Ignore user warnings :)

import warnings

warnings.filterwarnings("ignore", category=UserWarning)

# Required dependencies

import ewatercycle.forcing

import ewatercycle.observation.grdc

import ewatercycle.analysis

from pathlib import Path

from cartopy.io import shapereader

import pandas as pd

import numpy as np

from rich import print

import xarray as xr

import shutil

import json

import ewatercycle

import ewatercycle.models

from util_functions import *

You can change the folder structure as well, but note that you will have to change that in upcoming notebooks as well!

# Load settings

# Read from the JSON file

with open("settings.json", "r") as json_file:

settings = json.load(json_file)

camel_id = settings["caravan_id"]

historical_start = settings["calibration_start_date"]

historical_end = settings["calibration_end_date"]

future_start_data = settings["future_start_date"]

future_end_data = settings["future_end_date"]

Loading in the CMIP forcings#

# Load the generated historical data

historical_location = Path(settings["path_CMIP6"]) / "historical" / "work" / "diagnostic" / "script"

historical = ewatercycle.forcing.sources["LumpedMakkinkForcing"].load(directory=historical_location)

# Load the generated SSP126 data

ssp126_location = Path(settings["path_CMIP6"]) / "SSP126" / "work" / "diagnostic" / "script"

SSP126 = ewatercycle.forcing.sources["LumpedMakkinkForcing"].load(directory=ssp126_location)

# Load the generated SSP245 data

ssp245_location = Path(settings["path_CMIP6"]) / "SSP245" / "work" / "diagnostic" / "script"

SSP245 = ewatercycle.forcing.sources["LumpedMakkinkForcing"].load(directory=ssp245_location)

# Load the generated SSP585 data

ssp585_location = Path(settings["path_CMIP6"]) / "SSP585" / "work" / "diagnostic" / "script"

SSP585 = ewatercycle.forcing.sources["LumpedMakkinkForcing"].load(directory=ssp585_location)

# This additional steo is needed because ERA5 forcing data is stored deep in a sub-directory

era5_location = Path(settings['path_ERA5']) / "work" / "diagnostic" / "script"

ERA5_forcing_object = ewatercycle.forcing.sources["LumpedMakkinkForcing"].load(directory=era5_location)

print(settings)

{ 'country': 'Zimbabwe', 'caravan_id': 'AF_1491830.0', 'calibration_start_date': '1961-10-01T00:00:00Z', 'calibration_end_date': '1978-11-21T00:00:00Z', 'future_start_date': '2029-08-01T00:00:00Z', 'future_end_date': '2049-08-31T00:00:00Z', 'base_path': '/home/mmelotto/my_data/workshop_africa', 'central_data_path': '/data/shared/climate-data/camels_africa/zimbabwe/camel_data', 'path_shape': '/data/shared/climate-data/camels_africa/zimbabwe/camel_data/shapefiles/AF_1491830/AF_1491830.shp', 'path_caravan': '/home/mmelotto/my_data/workshop_africa/forcing_data/AF_1491830.0/caravan', 'path_ERA5': '/home/mmelotto/my_data/workshop_africa/forcing_data/AF_1491830.0/ERA5', 'path_CMIP6': '/home/mmelotto/my_data/workshop_africa/forcing_data/AF_1491830.0/CMIP6', 'path_output': '/home/mmelotto/my_data/workshop_africa/output_data/AF_1491830.0', 'hbv_parameters': [10.0, 0.87, 592.0, 1.4, 0.3, 1.0, 0.09, 0.01, 0.001], 'SCE_calibration_parameters': [ 6.586012553845907, 1.0, 557.8536779504233, 2.939688843608312, 0.001, 1.0, 0.1, 0.00024827990873074545, 3.2087728977063117 ], 'monte_carlo_calibration_parameters': [ 2.2809924457194377, 0.8237298558744328, 464.7470066408867, 1.5984658697300798, 0.29785129445020814, 1.1825488171931156, 0.07487754017358864, 0.008908532718571173, 5.605800944680686 ] }

Using the parameters we found earlier:

hbv_parameters: the one you found manually

monte_carlo_calibration_parameters: parameters based on monte carlo

SCE_calibration_parameters: parameters based on SCE

parameters_found = settings["SCE_calibration_parameters"]

# Si, Su, Sf, Ss, Sp

s_0 = np.array([0, 100, 0, 5, 0])

Setting up the models#

I took this bit from our Bachelor student Ischa, so thank you very much!

We put the forcings in a list and then we will use that to look through the models we created, using the BMI implementation.

NOTE: we use HBVLocal here to speed up the process. This is a model that just runs locally, without the setup of containers, which is proportionally heavier than the running of the actual model.

forcing_list_historic = [historical, ERA5_forcing_object]

name_list = ["historical CMIP", "ERA5"]

output_historic = []

years = []

# Create model object, notice the forcing object.

model_output_historic = dict()

for idx, forcings in enumerate(forcing_list_historic):

model = ewatercycle.models.HBVLocal(forcing=forcings)

config_file, _ = model.setup(

parameters=parameters_found,

initial_storage=s_0,

# cfg_dir = settings[""],

)

model.initialize(config_file)

Q_m = []

time = []

while model.time < model.end_time:

model.update()

Q_m.append(model.get_value("Q")[0])

time.append(pd.Timestamp(model.time_as_datetime))

output_historic.append(Q_m)

years.append(time)

model_output_historic[f"{name_list[idx]}"] = pd.Series(data=Q_m, name="modelled discharge, forcing: " + f"{name_list[idx]}", index=time)

model.finalize()

forcing_list_future = [SSP126, SSP245, SSP585]

name_list = ["SSP126", "SSP245", "SSP585"]

output_future = []

years_future = []

# Create model object, notice the forcing object.

model_output_future=dict()

for idx, forcings in enumerate(forcing_list_future):

model = ewatercycle.models.HBVLocal(forcing=forcings)

config_file, _ = model.setup(

parameters=parameters_found,

initial_storage=s_0,

# cfg_dir = settings[""],

)

model.initialize(config_file)

Q_m = []

time = []

while model.time < model.end_time:

model.update()

Q_m.append(model.get_value("Q")[0])

time.append(pd.Timestamp(model.time_as_datetime))

output_future.append(Q_m)

years_future.append(time)

model_output_future[f"{name_list[idx]}"] = pd.Series(data=Q_m, name="modelled discharge, forcing: " + f"{name_list[idx]}", index=time)

model.finalize()

Checking our results#

Finally, we look at the output and we use standard python libraries to visualize the results. We put the model output into a pandas Series to make plotting easier.

SSP126_output = pd.Series(data=output_future[0], name="SSP126", index=years_future[0])

SSP245_output = pd.Series(data=output_future[1], name="SSP245", index=years_future[1])

SSP585_output = pd.Series(data=output_future[2], name="SSP585", index=years_future[2])

historical_output = pd.Series(data=output_historic[0], name="Historical", index=years[0])

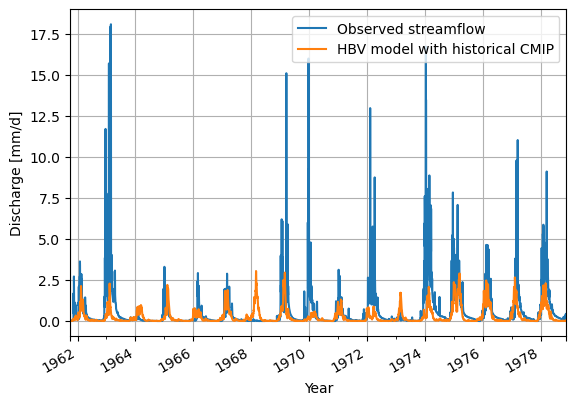

Looking at the historical data modelling first.

caravan_data_object = create_forcing_data_HBV_from_nc(settings["calibration_start_date"], settings["calibration_end_date"], settings["caravan_id"], settings["country"])

# Create a dataframe for the observations

ds_observation = xr.open_mfdataset([caravan_data_object['Q']]).to_pandas()

ds_observation = ds_observation['Q']

ds_observation.plot(label="Observed streamflow")

historical_output.plot(label="HBV model with historical CMIP")

plt.legend()

plt.grid()

plt.ylabel("Discharge [mm/d]")

plt.xlabel("Year")

plt.show()

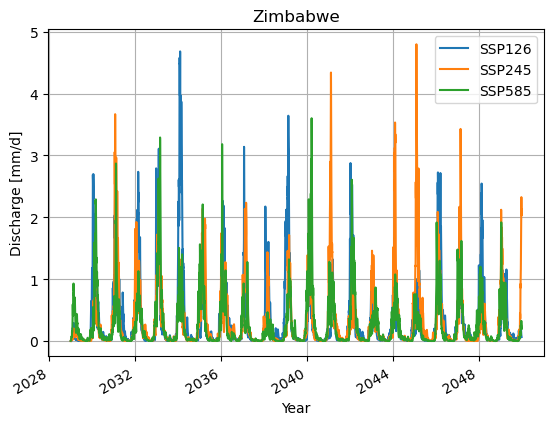

Now for the visualising you will look at the model outputs, which is, as you will see, pointless for this many years.

SSP126_output.plot()

SSP245_output.plot()

SSP585_output.plot()

plt.legend()

plt.grid()

plt.title(f"{settings["country"]}")

plt.ylabel("Discharge [mm/d]")

plt.xlabel("Year")

plt.show()

Done with the model runs#

Now go to the next Notebook to do some research!

But first let us save all the results (including ERA5):

caravan_data_object = caravan_data_object

caravan_discharge_observation = xr.open_mfdataset([caravan_data_object['Q']])

caravan_discharge_observation = caravan_discharge_observation.rename_vars({'Q':'Observed Q Caravan'})

# We want to also be able to use the output of this model run in different analyses. Therefore, we save it as a NetCDF file.

xr_model_output_historic = xr.merge([model_output_per_model.to_xarray() for name, model_output_per_model in model_output_historic.items()])

xr_model_output_historic = xr_model_output_historic.rename({'index': 'time'})

xr_model_output_historic.attrs['units'] = 'mm/d'

print(xr_model_output_historic)

<xarray.Dataset> Size: 158kB Dimensions: (time: 6573) Coordinates: * time (time) datetime64[ns] 53kB 1961-... Data variables: modelled discharge, forcing: historical (time) float64 53kB 0.001241 ...... modelled discharge, forcing: ERA5 (time) float64 53kB 0.001241 ...... Attributes: units: mm/d

# Saving the data for the next Notebook

# Interpolate model data to match the timestamps of the observations

xr_model_output_interp = xr_model_output_historic.interp(time=caravan_discharge_observation.time)

# Merge the interpolated model output with observations

xr_merged_historic = xr.merge([xr_model_output_interp, caravan_discharge_observation[['Observed Q Caravan']]])

print(xr_merged_historic)

<xarray.Dataset> Size: 175kB Dimensions: (time: 6261) Coordinates: * time (time) datetime64[ns] 50kB 1961-... Data variables: modelled discharge, forcing: historical (time) float64 50kB 0.003266 ...... modelled discharge, forcing: ERA5 (time) float64 50kB 0.001833 ...... Observed Q Caravan (time) float32 25kB dask.array<chunksize=(6261,), meta=np.ndarray> Attributes: units: mm/d

# Save the xarray Dataset to a NetCDF file

xr_merged_historic.to_netcdf(Path(settings['path_output']) / (settings['caravan_id'] + '_historic_output.nc'))

We will save the CMIP runs separately.

xr_model_output_future = xr.merge([model_output_per_model.to_xarray() for name, model_output_per_model in model_output_future.items()])

xr_model_output_future = xr_model_output_future.rename({'index': 'time'})

xr_model_output_future.attrs['units'] = 'mm/d'

# Save the xarray Dataset to a NetCDF file

xr_model_output_future.to_netcdf(Path(settings['path_output']) / (settings['caravan_id'] + '_future_output.nc'))

# Remove all temporary directories made by the optimization algo.

!rm -rf hbvlocal_*